The policy of search engines, and key advertising platforms is becoming tougher day by day. The current goals of the global players on the Internet are aimed at improving the user experience and increasing the level of security. And such a trend is not in the interests of many affiliate marketing affiliates.

If earlier Google could pessimize or exclude a site from issuing a site for the outright scam, now it applies sanctions for less serious offenses as well. Medicinal products without evidence of effectiveness are sold – in a ban, dubious crypt – in a ban, gambling without a suitable license – in the same place. Moreover, the latter is a particularly unpleasant point, because almost 80% of the entire gambling vertical in affiliate marketing is based on offers from online casinos and bookmakers with the famous Curacao license. Of course, for those who are in those, of course, that the Curacao license is an empty phrase, this licensing authority does not apply any real serious regulators. But before Google turned a blind eye to such a license, and now it does not suit him.

In connection with all the changes, it has become more difficult for many sites with characteristic content to compete for search results. Moreover, the competition is constantly growing. Therefore, cloaking often becomes a way out for them.

In this review, we will consider this method again, we will analyze the entire site cloaking algorithm. We have touched on this topic more than once, we have studied how to use cloaking in affiliate marketing with social and advertising networks, as well as cloaking affiliate links. Now we finally got to the site cloaking.

Table of contents

Types of SEO cloaking

A similar term often means two concepts at once. And, while technically they don’t have huge differences, the goals are seriously different.

The first type is SEO cloaking. And it is worth noting that this is precisely the very first type of cloaking that appeared on the Internet and gave its name to the entire field. This is a way to show search engine bots and users different landing pages for one search query.

The second type is site cloaking with illegal content on the page. This is a more dangerous method, after all, but it is also more necessary. After all, if you do not use SEO cloaking, then you will lower your position for various queries from the semantic core as much as possible. Yes, maybe lower it a lot, but there is always an alternative. Modern SEO methods work on different principles, you can easily promote a page by increasing the authority of the site, the EAT level, optimizing the page load speed, working with the behavioral factor, and so on. There is a way out, maybe more expensive and painstaking, but there is. But if there is prohibited content on the page, there are no more options. Either cloaking, or you fly out of the search engine indexes on the first visit by crawlers.

Why do you need cloaking on the site?

Let’s take a look at what this technique will bring you.

- a potential opportunity to increase your position in search results (why “potential”, we will tell below);

- the ability to get traffic for illegal, from the point of view of the search engine, offers;

- weed out unnecessary users;

- improve conversion rates on affiliate links (if you use specific link cloaking).

Is cloaking legal

Well, from the point of view of the direct legislation of almost any country, cloaking itself is not considered a crime or offense. Illegal content – here it can be different, for one you will get maximum pessimization, for the other – interest from human rights structures.

Cloaking itself is considered illegal only from the point of view of the one against whom it is applied. That is, advertising and social networks (more precisely, their moderators and bots), and in our case, Google.

But the search engine also provides for different sanctions depending on the depth of the offense of the offender. Conventionally, these levels can also be divided into 3 parts.

The first is white cloaking. This is work on optimizing the page for search robots. And the final version is less readable text than it could be without SEO. Therefore, the webmaster creates a second page where he enters the same information (or even more complete), but without optimization. After the transition, the user fully satisfies his request interest and receives complete and detailed information. And in principle, remains satisfied. The search engine, seeing a behavioral characteristic, may not only initiate sanctions but even, on the contrary, increase its position in the search results. But we will warn you right away – the decision remains at the discretion of the moderators and Google algorithms. And they sometimes behave unpredictably even for experienced optimizers. Accordingly, there can always be the opposite effect.

The second is gray cloaking. The user receives the same information, but not in fullness, not as accurately as optimization for bots implies. That is if the white page is spammed with the keyword “how to assemble a loft bed with your own hands from improvised materials”, and similar keywords from the core, the search engine evaluates the information as relevant for queries. And the transitioning user on the black page receives an advertising text about buying beds in a particular store. Or it just ends up in a product showcase. It seems that the request is partially satisfied, but not at all in the way the user planned. Here, if the scheme is revealed, the consequences will be negative. Decreases in position by keys, but not a ban.

The third is black cloaking. Completely irrelevant information on the page, casino ads along with addresses of kindergartens in the area, and so on. Or illegal content in terms of search. Inevitable ban and exclusion from the indexes, as a result, if a forgery is revealed.

Is site cloaking effective?

But this is an interesting question. If we compare the effectiveness with the search engine algorithms of 10-15 years ago – without a doubt, an effective and efficient technique. Now it’s more no than yes. Remember we said that in white cloaking methods, you can give the user reference content, and provide just a spammy page for bots. So, in 2022, you won’t need it. Reference content > than full keyword optimization. Behavioral characteristics play a bigger role than key spam or even LSI. Yes, and you can’t completely avoid the keys. Plus, “clumsy” form requests are no longer relevant. That is, if the user enters something like “buy a cheap vacuum cleaner with delivery in 5 minutes”, then the page where “buy cheap vacuum cleaners with delivery no more than 5 minutes” is found will be relevant. human,

Therefore, if you have the opportunity to fill the site with reference content that users will 100% like, you do not need SEO cloaking. But it is expensive, long and not everyone can handle it. Even among professional copywriters, there are not so many masters of their craft who can interest the reader in a banal text or find accurate and up-to-date information on a topic.

Setting up SEO cloaking – guide

Okay, we’ve got the preliminaries out of the way. Now you can decide for yourself whether you need such a technique. Of course, if you are driving search traffic directly to gray offers, you cannot do without it. If you do not have funds for competent content – too. In all other cases, we would recommend refraining from it.

So, let’s move on to the step-by-step algorithm work.

Creation of white and black pages

First, you need to determine exactly how many pages you are going to clone. If you are driving to a specific gray offer, then you just need to focus all your attention on one landing page. But remember that you still don’t optimize one landing for many queries. As a result, you will need one black page and many white pages.

How it works:

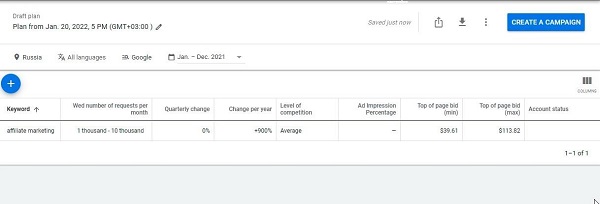

- you make up the semantic core for which you are going to work. These are all keywords and phrases related to the main query that defines the topic. You can use the keyword planner from Google or other services;

- for each high-frequency, you set one page, for mid-frequency one for 4-5 requests, for low-frequency one for 10-15. Atthis keys can and should be combined based on the search intent;

- then we form optimized content for each request. The content is not important, even machine translation will do, the main thing is the required number of key queries and LSI words;

- for all received pages we set one clone – the black page, which is the landing page of our offer.

It should be understood that if you select key queries that are far from the offer on the landing page, then the percentage of instant bounces will be catastrophically high. On the other hand, if the queries in the semantic core correspond to the theme of the landing, then the coverage of the keys will be much narrower and, of course, there will be less traffic.

What option to choose? Only the second is to select the semantic core in thematic accordance with the offer. After all, the percentage of instant failures will roughly balance the amount of traffic, plus or minus an equal indicator. But the behavioral characteristics will be heavily corrupted in the eyes of Google. That is, the search engine will instantly open the schemes simply due to the constant departure of users from the page after the transition. And even if you produce a white page every day in large volumes, the site itself will go under the ban, and you can turn off the shop.

If we do not promote a gray offer, but simply work to increase the amount of traffic and improve positions for our thematic queries, that is, SEO cloaking, then the system becomes completely different.

- we also constitute the semantic core. Only now not for the offer landing, but the entire site as a whole. To begin with, you need a basic core, because it will be constantly supplemented, the site must be updated permanently, otherwise, the search engine will start expelling it from the achieved positions;

- we also create a white page with optimized spam;

- for each white page, we construct a separate black page. Therefore, their number at the end should be identical;

- the white page is already filled not optimized, but the information we need, often selling texts.

We upload all the created pages to the hosting and we can proceed to the second part.

Choice of Cloaker

While we are talking purely about working with a Cloaker. Next, we will clarify the principles of manual cloaking, but we will immediately warn you – that it has low efficiency. If SEO cloaking, in principle, is no longer on horseback, then its manual version is, in principle, a waste of time.

A Cloaker is a special service that is used to automate and improve the cloaking process. In fact, with the right settings, it will do everything for you. You only need to provide downloaded pages and their clones.

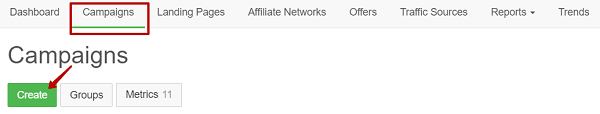

Almost all Сloakers work on the same technology. To begin with, we create a new campaign directly through the utility.

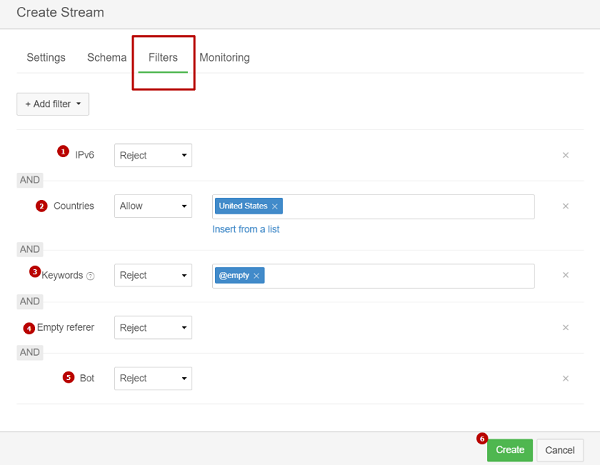

Next, we create a stream that we are going to send to the white page. Usually, different filters are available for this

- by IP type;

- geographic factor;

- country;

- a key request of the transition;

- block or skip empty transitions;

- block or let bots through.

Obviously, for cloaking in a search engine, we need only one thing – to understand whether it is a bot or a person. And this is done in two main ways. By user-agent name or address. The first option, which will reveal the open secret, does not work. Google has long been able to bypass such blocking for crawlers.

But forwarding by IP address is still effective. And any good Cloaker can do it if… you give him these bot addresses. Yes, yes, not a single legal Cloaker has its bot database. Illegal ones, of which there are also enough on the network, have. But they often cost more. Yes, and the relevance of their databases is different, it is not so easy to choose a worthwhile one.

The meaning is simple, after the transition, the Cloaker automatically checks the address and finds out who came to us, a user or a search bot. The first goes to the black page, the second goes to the white page. Therefore, our next step is to search the database.

Searching for a database of addresses of search engine bots

If you could not / wanted to use the Cloaker with the current database or simply did not find it, then you will have to look for information separately.

Up-to-date databases of IP addresses are also distributed through the network as a commodity, on specialized exchanges, Telegram channels, or even on the Dark Web. We will not publish any links to thematic communities, but a simple search for the relevant keys will be enough. For the Darknet, it is recommended to use thematic search engines, and not the general ones, in the style of DuckDuckGo.

However, you can easily find typical address databases on Google, and even for free. But the relevance of this information is often in doubt. Therefore, high-quality bot databases are almost always paid for.

You can also combine paid trackers and Сloakers. The former will give you excellent functionality that allows you to easily set up redirects even with a low level of competence in the field, and the latter will give you the database of bot addresses itself. For example, good old Binom looks good for such a role.

By the way, this only applies to crawlers. Spy bots that can be sent by competitors and scammers are not blocked this way. After all, no one provides a database of their addresses; it does not exist on the network. True, advanced Сloakers have their options that protect against spy bots. Not with 100% probability, no one can guarantee this, but the percentage is significantly reduced. Cloakers filter up to 90% of regular spy bots.

System test

After preparing landing pages and their clones, as well as setting up a Cloaker and downloading an up-to-date database of addresses, it’s time for tests. This is a pretty simple step. We just need to evaluate the performance of the system, and at the same time, you will understand whether you have loaded a good database of addresses.

Therefore, test runs are recommended to be done using harmless clones. If you later check the statistics and realize that the search bots were not correctly identified, you went to the black page, but you don’t risk anything. For the test, in principle, you can even use a full clone of the white page with the same content, just with a different address. After all, if you launch the system right away, and then it turns out that crawlers freely got to the black page with illegal offers, then the site will simply go under the ban.

Manual methods of SEO cloaking

All the tricks come down to a banal invisible text. That is, you leave purely optimized content on the page, but hide it from users. Robots see it, and index it, but people do not.

This is achieved with:

- text color that exactly matches the background color. The content is invisible, but it has its own space, which can be selected with the mouse. Then the highlighted text will become visible;

- minimum font size. For the user, visually, this is a solid bottom line, no more, it does not attract much attention;

- moving text outside CSS tables. Just keep in mind that such content may be displayed differently for mobile users and desktop visitors.

But all the described methods have a lot of disadvantages. Deterioration of behavioral characteristics, incorrect display of site elements, and search bots are already quite tolerably able to determine whether the text was invisible or not. Plus, you are not submitting fully optimized content for search engine verification, but only additional optimized parts of it. SEO content should be evenly distributed throughout the text. Therefore, the only option is to fill in inconvenient keywords in the invisible text, which simply cannot be inserted into the text without losing readability. But as we have already clarified, such requests now do not have to be inserted in the exact word form.

Recommendations for site cloaking

We have put together a few practical tips that will help you better understand how to cloak your site (and whether it is worth doing in principle):

- of all types of site cloaking, only affiliate link cloaking can be called strictly necessary and almost always appropriate. So you bring them into a more visually pleasing form, increase the credibility of your resource, protect my affiliate commission from the actions of intruders, and encrypt the address inside the link. Cloaking when placing illegal content on your site is necessary because there are simply no other alternatives. Unless, of course, you count on search traffic. You can also drive customers from other sources, completely forgetting about Google. For example, by creating advertising campaigns in advertising networks. But even in this case, most platforms will first check your resource so as not to be complicit in spreading the scam. And here it all depends on the rules of the platform. Social networks, for example, will cover the RK that leads to a site with an adult, but many advertising networks will not, among them there are even purely niche projects in this vertical;

- SEO cloaking is currently not an effective technique. Its only advantage is that it is cheap compared to filling a large site with reference content. But he has a lot of downsides. Spamming with keys does not work well, search engines quickly calculate cloaking, and texts for people with a minimal SEO component eventually give a more effective output. Plus, the text content itself is no longer decisive. There are many other options – EAT, Core Web Vitals, quality topic links, logical internal linking, optimization for mobile display, clear structure, and so on. The combination of these parameters has much more weight than the quality of text content and optimization in general;

- Now search intent is of great importance, which means that texts should be written not for a specific request, but the desire of the user. What he wanted to say with this request, what he wanted to find. Accordingly, the value of optimization for the exact key is increasingly reduced.

Conclusion

What do we see as a result? SEO is alive, but SEO cloaking is dying. And this is the expected outcome. It is worth doing it only if you fill the pages of the site with content for which Google will simply strike you out of the indexes. And even then, maybe in this case it is more logical to work simply through traffic from advertising platforms, and not with search engines? After all, if cloaking is detected, in the first case, only your advertising campaign will be affected. And in the second – the entire site.

Therefore, our opinion is unequivocal, cloaking is a great method that will allow you to hide an unwanted offer from the eyes of moderators and bots, protect you from competitors’ actions or filter out target users. But for the SEO component, this is not the best choice.